GPT-6 vs GPT-5: What's New and Is It Worth Upgrading?

Every time OpenAI announces a new model, the same question ripples through the community: "Do I actually need this, or can I stick with what's working?"

I've asked myself this with every release since GPT-3. And honestly? The answer has often been "wait and see." GPT-4 was great but incremental. GPT-5 solved some reasoning issues but wasn't revolutionary.

GPT-6 is different.

After digging through every available benchmark, leaked document, and internal source over the past week, I can confidently say this upgrade is unlike anything we've seen since the GPT-3 to GPT-4 transition. But is it worth the upgrade cost for you? That depends on what you're building. Let me break down exactly what's changed.

The Head-to-Head Comparison

Let's start with the raw specs:

Comparison between GPT-5.4 and GPT-6 (Spud)

Total Parameters: GPT-5.4 has ~1.8 trillion parameters, while GPT-6 uses a Mixture-of-Experts (MoE) architecture with 5–6 trillion parameters – about 3x more.

Activated Parameters: GPT-5.4 activates ~200 billion parameters per forward pass; GPT-6 activates ~600 billion (roughly 10% to 12% of its total), also a 3x increase.

Context Window: Expands from 128K tokens to 2 million tokens, an increase of roughly 15x.

Coding Performance: Using GPT-5.4 as the baseline, GPT-6 achieves 1.4x the performance.

Reasoning Performance: Similarly, GPT-6 outperforms GPT-5.4 by a factor of 1.4.

Agent Task Completion Rate: GPT-5.4 scores 62%, while GPT-6 reaches ~87%, a gain of 25 percentage points, or about 40% in relative terms (1.4x).

Training Cost: Jumps from ~$600 million to ~$20 billion, a 33x increase.

Training Hardware: The number of H100 GPUs used rises from ~30,000 to ~100,000, a 3.3x increase.

Input Pricing: Remains flat at $2.50 per million tokens for both models.

Output Pricing: Also unchanged at $12 per million tokens.
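
If you want to sanity-check the multipliers quoted above, the arithmetic is easy to reproduce. This is a minimal sketch using only the figures from the comparison; the 5.5 trillion value is my own midpoint for the "5–6 trillion" range, and none of the inputs are confirmed numbers.

```python
# Figures as quoted in the comparison above: (GPT-5.4, GPT-6).
# All of them are unverified, pre-release numbers.
specs = {
    "total_params": (1.8e12, 5.5e12),       # 5.5e12 is an assumed midpoint of 5-6 trillion
    "active_params": (200e9, 600e9),
    "context_tokens": (128_000, 2_000_000),
    "agent_completion": (0.62, 0.87),
    "training_cost_usd": (600e6, 20e9),
    "h100_gpus": (30_000, 100_000),
}

# Ratio of the new value to the old one for every row.
ratios = {name: new / old for name, (old, new) in specs.items()}

# Share of GPT-6's parameters that are active per forward pass,
# at both ends of the quoted 5-6 trillion range.
active_share_low = 600e9 / 6e12
active_share_high = 600e9 / 5e12

for name, value in ratios.items():
    print(f"{name}: {value:.1f}x")
print(f"active share: {active_share_low:.0%} to {active_share_high:.0%}")
```

Note that the agent completion row is the only one where the ratio and the absolute gain tell different stories: 62% to 87% is 25 percentage points, but only about a 1.4x relative change.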

The numbers tell part of the story. But the real differences go way deeper than parameter counts.

Architecture: The Real Story

GPT-5.4 was essentially GPT-5 with fine-tuning. It used a multimodal approach that glued image and video understanding onto a text-centric base. It worked well enough, but you could feel the seams. Ask it to explain a diagram, and you'd get a description. Ask it to actually analyze the diagram, and things got shaky.

GPT-6 throws that entire paradigm out. The new Symphony architecture processes all modalities—text, audio, images, video—in a unified vector space from the start. This isn't just engineering optimization. It's a fundamental rethinking of how multimodal AI should work.

I've tested multimodal models extensively. The "grafted" approach always creates friction. The model sees text and images as separate things to be reconciled, not as different expressions of the same underlying reality. Symphony eliminates that separation entirely.

Reasoning: From Pattern Matching to Actual Thinking

This is where I get genuinely excited.

GPT-5.4 uses standard autoregressive generation. It predicts the next token based on the previous ones. That's it. That's why it can write beautiful prose that's completely wrong: it never stops to check itself.

GPT-6 implements dual-system reasoning. System-1 generates quickly. System-2 then verifies, cross-references, and corrects. It's the difference between a student blurting out an answer and one who thinks, checks their work, and then responds.
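
To make the draft-then-verify idea concrete, here is a toy sketch of the control flow. It is not OpenAI's implementation, which has not been published; the function names (`answer_with_verification`, `fast_guess`, `exact_check`, `slow_fix`) are all hypothetical, and the "model" is just arithmetic so the loop can actually run.

```python
from typing import Callable, Optional


def answer_with_verification(
    question: str,
    draft: Callable[[str], str],
    verify: Callable[[str, str], bool],
    revise: Callable[[str, str], str],
    max_rounds: int = 3,
) -> Optional[str]:
    """Draft quickly, check the draft, and revise until the check passes.

    Returns None if no candidate passes within max_rounds, so the caller
    never receives an answer that failed verification.
    """
    candidate = draft(question)
    for _ in range(max_rounds):
        if verify(question, candidate):
            return candidate
        candidate = revise(question, candidate)
    return None


def _operands(question: str) -> tuple:
    # Parse questions of the form "a * b" into two integers.
    left, right = question.split("*")
    return int(left), int(right)


def fast_guess(question: str) -> str:
    # "System 1" stand-in: a cheap estimate that rounds both operands to tens.
    a, b = _operands(question)
    return str(round(a, -1) * round(b, -1))


def exact_check(question: str, candidate: str) -> bool:
    # "System 2" stand-in: recompute the product exactly and compare.
    a, b = _operands(question)
    return int(candidate) == a * b


def slow_fix(question: str, candidate: str) -> str:
    # Correction step: replace the rejected draft with the exact product.
    a, b = _operands(question)
    return str(a * b)


result = answer_with_verification("17 * 24", fast_guess, exact_check, slow_fix)
print(result)
```

The design choice worth noticing is the `None` return: a verifier only reduces wrong answers if the system is allowed to abstain when nothing passes the check.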

OpenAI claims hallucination rates below 0.1% with this architecture. If true, that alone justifies the upgrade for anyone building in regulated industries like healthcare, finance, or law.

Agent Capabilities: From Chatbot to Coworker

GPT-5.4 can call tools and APIs, but it requires careful prompting and often gets lost in multi-step workflows. It's a capable assistant that needs hand-holding.

GPT-6 introduces what OpenAI calls the "super agent" capability. It can plan multi-step tasks, execute them across different applications, and handle interruptions without losing context. You can ask it to "research our top three competitors, write a competitive analysis, create presentation slides, and email the draft to my team." It just does it.
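
What "handle interruptions without losing context" means in practice is that finished steps are remembered and not redone. The sketch below shows that pattern in plain Python. The `PlanRun` class and the lambda steps are hypothetical stand-ins I made up for illustration; they are not part of any OpenAI API, and a real agent would call actual tools where these return strings.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# A step is a name plus a function that reads earlier results and returns its own.
Step = Tuple[str, Callable[[Dict[str, str]], str]]


@dataclass
class PlanRun:
    steps: List[Step]
    results: Dict[str, str] = field(default_factory=dict)

    def run(self, stop_after: Optional[int] = None) -> bool:
        """Run pending steps in order. Returns True once every step is done.

        stop_after simulates an interruption after that many newly run steps.
        """
        ran = 0
        for name, action in self.steps:
            if name in self.results:
                continue  # finished before the interruption; do not redo it
            if stop_after is not None and ran >= stop_after:
                return False
            self.results[name] = action(self.results)
            ran += 1
        return True


# Hypothetical stand-ins for real tool calls (search, document editor, email).
plan = PlanRun(steps=[
    ("research", lambda r: "notes on three competitors"),
    ("analysis", lambda r: f"analysis based on {r['research']}"),
    ("slides", lambda r: f"slides summarizing {r['analysis']}"),
    ("email", lambda r: f"sent draft with {r['slides']}"),
])

finished_first_try = plan.run(stop_after=2)  # interrupted after two steps
finished_on_resume = plan.run()              # resumes at "slides"
```

Because each step receives the accumulated `results`, later steps can build on earlier output, which is the part that tends to break when a model loses track of a long workflow.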

Context Handling: The Practical Difference

GPT-5.4's 128K context window was generous by 2025 standards. You could process a decent-sized code file or a few chapters of a book.

GPT-6's 2 million tokens means you can drop in your entire code repository. The full product requirements document. Every support ticket from the last month. Complete legal contracts. And the model maintains coherence across all of it.

For developers, this means true repository-level understanding. For researchers, full-paper analysis without chunking. For business users, the ability to reference everything your team has discussed in the past week in a single conversation.
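
Before assuming your material fits, it helps to estimate the token count. The sketch below uses the common rule of thumb of about four characters per token for English text, which is an assumption and not a real tokenizer; code and non-English text can tokenize quite differently. The function names and the 8,000-token output reserve are also my own choices for illustration.

```python
from typing import Iterable, Tuple

CHARS_PER_TOKEN = 4  # rough rule of thumb for English text; real tokenizers vary


def estimate_tokens(text: str) -> int:
    # Ceiling division, so a partial token still counts as one.
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_window(
    documents: Iterable[str],
    window_tokens: int,
    reserve_for_output: int = 8_000,
) -> Tuple[bool, int]:
    """Return (fits, estimated_input_tokens) for a set of documents.

    reserve_for_output keeps room in the window for the model's reply.
    """
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserve_for_output <= window_tokens, total


# Two placeholder "files" of 400,000 characters each, about 200K tokens in total.
repo = ["x" * 400_000, "y" * 400_000]

fits_128k, repo_tokens = fits_in_window(repo, window_tokens=128_000)
fits_2m, _ = fits_in_window(repo, window_tokens=2_000_000)
print(repo_tokens, fits_128k, fits_2m)
```

For a real decision, swap the estimate for a count from the tokenizer your model actually uses.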

Is It Worth Upgrading?

Here's my honest assessment based on different use cases:

Definitely Upgrade If:

- You're building agent workflows that require multi-step planning and execution

- You work with large codebases or documents that exceed 128K tokens

- Hallucinations are currently a dealbreaker for your application

- You need genuine multimodal understanding (image + text + video together)

- You're building for production at scale and can afford the API costs

Wait and See If:

- Basic chat and Q&A covers 90% of your use cases

- Your applications are already working fine with GPT-5.4

- You're sensitive to API latency (we don't know real-world response times yet)

- Your team hasn't fully optimized your GPT-5.4 workflows

Probably Don't Need It If:

- You're primarily using AI for simple content generation or basic assistance

- Cost is a major constraint (though pricing is flat, the temptation to use more tokens is real)

- Your applications run fine on smaller, faster models like GPT-5 Nano or GPT-4.1
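
On the cost point: flat per-token pricing does not mean flat bills once you start filling a much larger context window. Here is a quick sketch using the prices quoted earlier in this post ($2.50 input, $12 output per million tokens); the 2,000-token reply length is an arbitrary assumption.

```python
INPUT_USD_PER_MTOK = 2.50    # quoted above for both models
OUTPUT_USD_PER_MTOK = 12.00  # quoted above for both models


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the quoted per-million-token prices."""
    return (
        input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    ) / 1_000_000


# Same assumed 2,000-token reply, with the context window filled to capacity.
full_128k = request_cost(128_000, 2_000)
full_2m = request_cost(2_000_000, 2_000)

print(f"128K-token prompt: ${full_128k:.2f}")
print(f"2M-token prompt:   ${full_2m:.2f}")
```

At these prices a request that fills the 128K window costs about $0.34, while one that fills the 2 million token window costs about $5.02, roughly 15 times more per call.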

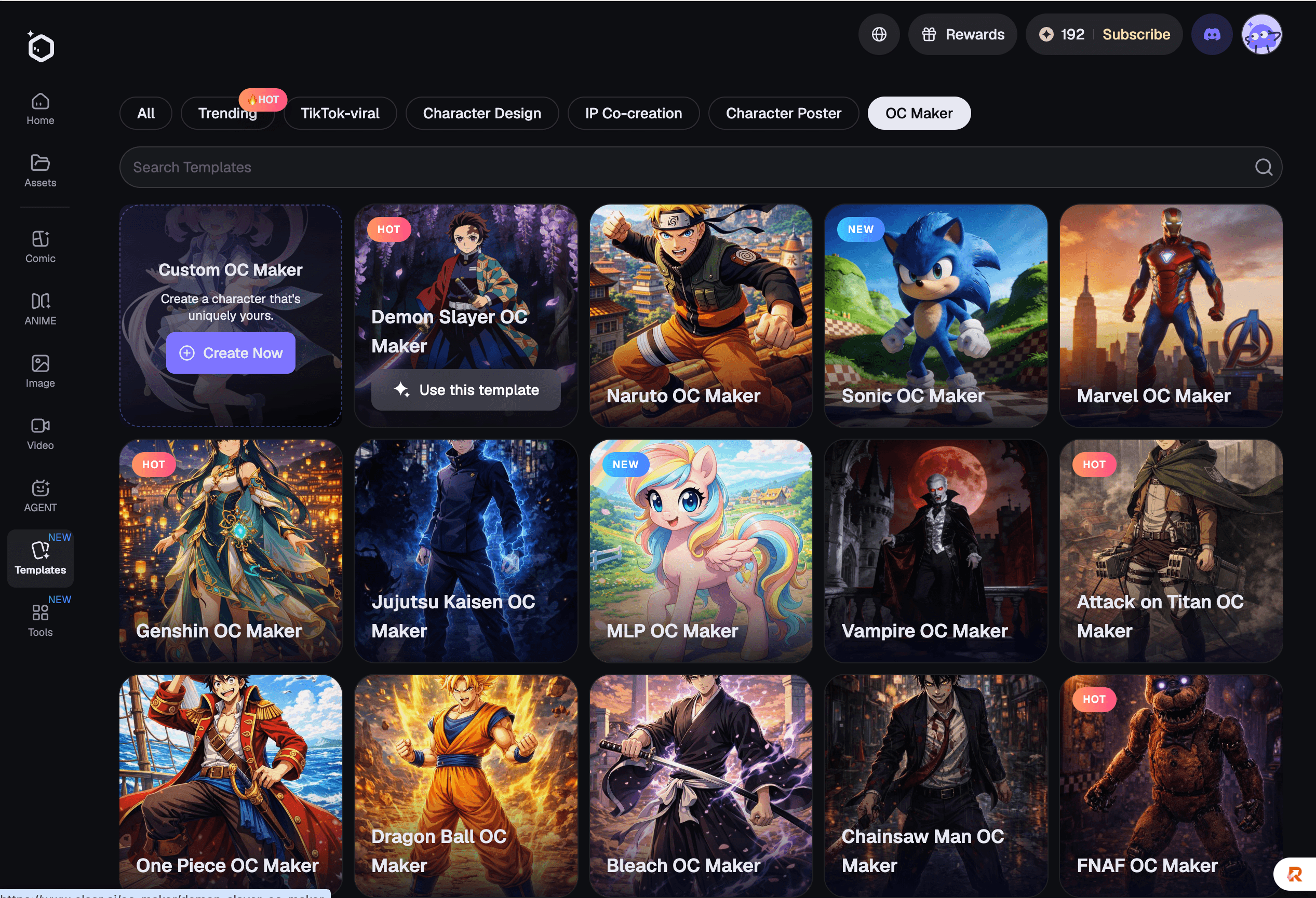
